Hiya. Can I filter which scattergeo text labels are shown based on a condition, for example only where the ‘count’ column is greater than or equal to a value such as 34?

Alternatively, can I pass a specific list of ‘State_code’ abbreviations to label?

I want only the states with the highest counts to be labeled with their counts. In other words, hide the count labels on all states except California, Texas, and New York.

import pandas as pd
import plotly.express as px

# make a minimal dataframe
data = [['CA', 42], ['NY', 41], ['TX', 34], ['FL', 31], ['PA', 26]]
dfdemo_map = pd.DataFrame(data, columns=['State_code', 'count'])

# plot a choropleth with color range by count per state
fig = px.choropleth(
    dfdemo_map,
    locations='State_code',
    locationmode="USA-states",
    scope="usa",
    color='count',
    color_continuous_scale="Oranges",
)

# center the title
fig.update_layout(title_text='How Many Survey Respondents from each State', title_x=0.5)

# label states with count
fig.add_scattergeo(
    locations=dfdemo_map['State_code'],
    locationmode="USA-states",
    text=dfdemo_map['count'],
    featureidkey="properties.NAME_3",
    mode='text',
    textfont=dict(
        family="helvetica",
        size=24,
        color="white",
    ),
)

fig.show()

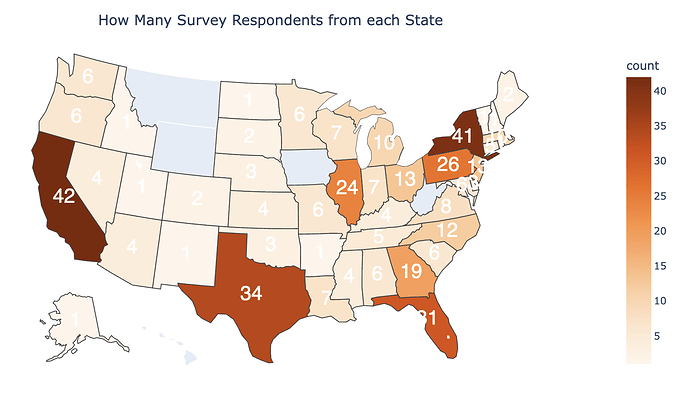

This is what my map currently looks like.